1 MySQL安装

- ==卸载安装均需要在root用户身份下==

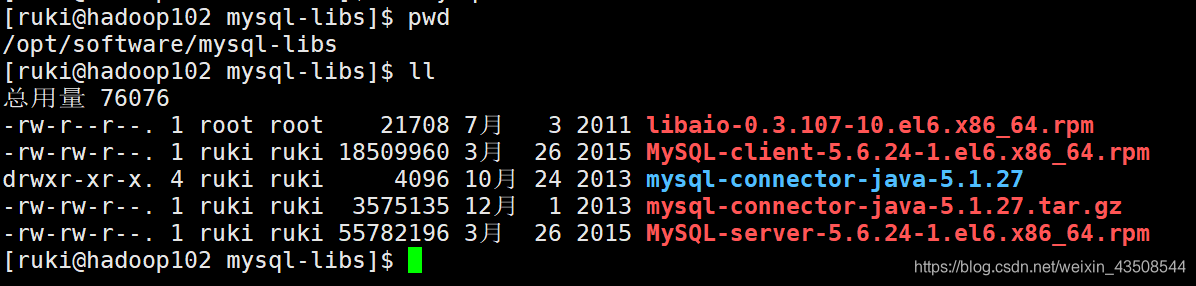

1.1 安装包准备

- 查看mysql是否安装,如果安装,先卸载(需要在

root角色下)

#查看

rpm -qa|grep mysql#卸载

rpm -e --nodeps mysql-libs-5.1.73-7.el6.x86_64- 解压

mysql-libs.zip文件到/opt/software目录

unzip mysql-libs.zip

1.2 安装mysql服务器

安装mysql服务端(在

/opt/software/mysql-libs目录下)- 如果安装报错(博主遇到了),参考这篇文章:https://blog.csdn.net/qq_42191775/article/details/103939104

rpm -ivh MySQL-server-5.6.24-1.el6.x86_64.rpm- 查看产生的随机密码(之后改密码需要用)

cat /root/.mysql_secret- 查看mysql状态

service mysql status- 启动mysql

service mysql start1.3 安装mysql客户端

- 安装mysql客户端(在

/opt/software/mysql-libs目录下)

rpm -ivh MySQL-client-5.6.24-1.el6.x86_64.rpm- 连接Mysql

mysql -uroot -p[步骤1.2.2得到的随机密钥]- 修改密码

set password=password('root')- 退出

exit1.4 mysql中主机配置(user表)

配置只要是root 用户+密码,在任何主机上都能登录MySQL 数据库。

- 进入mysql

mysql -uroot -proot- 显示数据库

show databases;- 使用mysql数据库

use mysql;

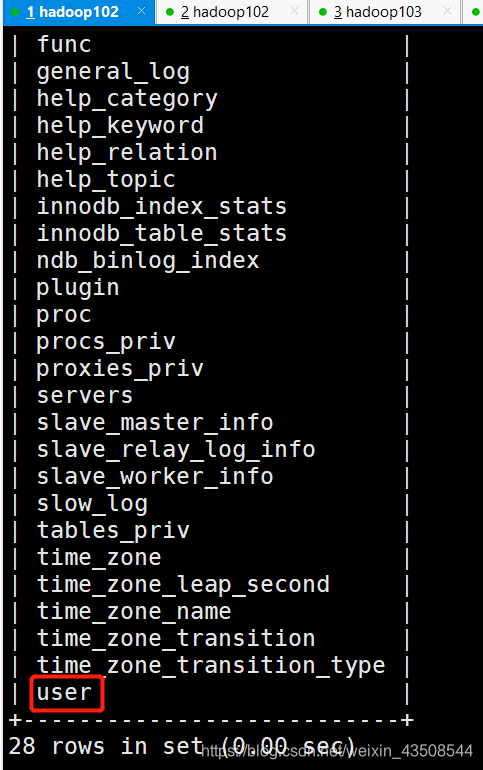

- 显示mysql中的所有表

show tables;

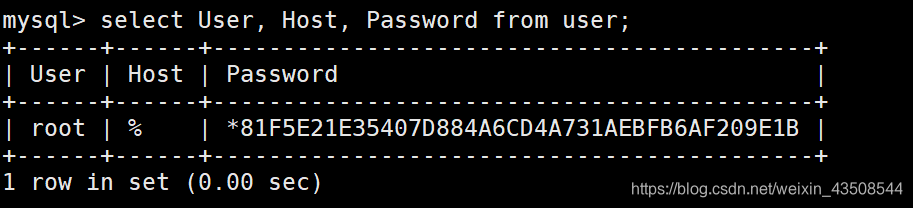

- 查询user表

select User, Host, Password from user;- 修改user 表,把Host 表内容修改为%

update user set host='%' where host='localhost';- 删除root用户其他host

delete from user where Host='hadoop102';

delete from user where Host='127.0.0.1';

delete from user where Host='::1';- 刷新

flush privileges;

- 退出

quit2 Sqoop的安装

2.1 安装sqoop

- 上传安装包

sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz到hadoop102 的/opt/software路径中 - 将安装包解压到

/opt/module

tar -zxf sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz -C /opt/module/- 修改文件夹的名字(

/opt/module)

mv sqoop-1.4.6.bin__hadoop-2.0.4-alpha/ sqoop2.2 修改配置文件

- 进入到

/opt/module/sqoop/conf目录,重命名配置文件

mv sqoop-env-template.sh sqoop-env.sh- 修改配置文件

#增加如下内容

export HADOOP_COMMON_HOME=/opt/module/hadoop-2.7.2

export HADOOP_MAPRED_HOME=/opt/module/hadoop-2.7.2

export HIVE_HOME=/opt/module/hive

export ZOOKEEPER_HOME=/opt/module/zookeeper-3.4.10

export ZOOCFGDIR=/opt/module/zookeeper-3.4.10/conf

export HBASE_HOME=/opt/module/hbase2.3 拷贝JDBC驱动

- 进入到

/opt/software/mysql-libs路径,解压mysql-connector-java-5.1.27.tar.gz到当前路径

tar -zxvf mysql-connector-java-5.1.27.tar.gz- 进入到

/opt/software/mysql-libs/mysql-connector-java-5.1.27路径,拷贝jdbc 驱动到sqoop的lib目录下。

cp mysql-connector-java-5.1.27-bin.jar /opt/module/sqoop/lib/2.4 测试Sqoop是否能连接数据库

bin/sqoop list-databases --connect jdbc:mysql://hadoop102:3306/ --username root --password root

3 业务数据的生成

- 在hadoop102 的

/opt/module/目录下创建db_log文件夹

mkdir db_log- 把

gmall-mock-db-2020-03-16-SNAPSHOT.jar和application.properties上传到hadoop102的/opt/module/db_log路径上。 根据需求修改

application.properties相关配置- 通过修改

mock.date=2020-03-11,生成那天的数据 - 通过修改

mock.clear=0,删除原有的数据,生成新的随机数据

- 通过修改

logging.pattern.console=%m%n

logging.level.root=info

spring.datasource.driver-class-name=com.mysql.jdbc.Driver

spring.datasource.url=jdbc:mysql://hadoop102:3306/gmall?characterEncoding=utf-8&useSSL=false&serverTimezone=GMT%2B8

spring.datasource.username=root

spring.datasource.password=root

logging.pattern.console=%m%n

mybatis-plus.global-config.db-config.field-strategy=not_null

#业务日期

mock.date=2020-03-11

#是否重置 1表示重置 0表示不重置

mock.clear=0

#是否生成新用户

mock.user.count=50

#男性比例

mock.user.male-rate=20

#收藏取消比例

mock.favor.cancel-rate=10

#收藏数量

mock.favor.count=100

#购物车数量

mock.cart.count=10

#每个商品最多购物个数

mock.cart.sku-maxcount-per-cart=3

#用户下单比例

mock.order.user-rate=80

#用户从购物中购买商品比例

mock.order.sku-rate=70

#是否参加活动

mock.order.join-activity=1

#是否使用购物券

mock.order.use-coupon=1

#购物券领取人数

mock.coupon.user-count=10

#支付比例

mock.payment.rate=70

#支付方式 支付宝:微信 :银联

mock.payment.payment-type=30:60:10

#评价比例 好:中:差:自动

mock.comment.appraise-rate=30:10:10:50

#退款原因比例:质量问题 商品描述与实际描述不一致 缺货 号码不合适 拍错 不想买了 其他

mock.refund.reason-rate=30:10:20:5:15:5:5- 并在该目录下执行,如下命令,生成2020-03-10 日期数据:

java -jar gmall-mock-db-2020-03-16-SNAPSHOT.jar4 同步策略

4.1 全量同步策略

- 每天存储一份完整的数据,适用于数据量不大,且每天既有新数据插入,也会有旧数据修改的场景。

4.2 增量同步数据

- 每天存储一份增量数据,适用于数据量大,且每天只会有新数据插入的场景。

4.3 新增及变化策略

- 存储创建时间和操作时间都是当天的数据

4.4 特殊策略

- 只同步一遍就可以的数据。如客观世界维度,日期维度,地区维度的数据。

5 mysql->sqoop->hdfs脚本编写

- 在

/home/atguigu/bin目录下创建mysql_to_hdfs.sh

#! /bin/bash

sqoop=/opt/module/sqoop/bin/sqoop

do_date=`date -d '-1 day' +%F`

if [[ -n "$2" ]]; then

do_date=$2

fi

import_data(){

$sqoop import \

--connect jdbc:mysql://hadoop102:3306/gmall \

--username root \

--password root \

--target-dir /origin_data/gmall/db/$1/$do_date \

--delete-target-dir \

--query "$2 and \$CONDITIONS" \

--num-mappers 1 \

--fields-terminated-by '\t' \

--compress \

--compression-codec lzop \

--null-string '\\N' \

--null-non-string '\\N'

hadoop jar /opt/module/hadoop-2.7.2/share/hadoop/common/hadoop-lzo-0.4.20.jar com.hadoop.compression.lzo.DistributedLzoIndexer /origin_data/gmall/db/$1/$do_date

}

import_order_info(){

import_data order_info "select

id,

final_total_amount,

order_status,

user_id,

out_trade_no,

create_time,

operate_time,

province_id,

benefit_reduce_amount,

original_total_amount,

feight_fee

from order_info

where (date_format(create_time,'%Y-%m-%d')='$do_date'

or date_format(operate_time,'%Y-%m-%d')='$do_date')"

}

import_coupon_use(){

import_data coupon_use "select

id,

coupon_id,

user_id,

order_id,

coupon_status,

get_time,

using_time,

used_time

from coupon_use

where (date_format(get_time,'%Y-%m-%d')='$do_date'

or date_format(using_time,'%Y-%m-%d')='$do_date'

or date_format(used_time,'%Y-%m-%d')='$do_date')"

}

import_order_status_log(){

import_data order_status_log "select

id,

order_id,

order_status,

operate_time

from order_status_log

where

date_format(operate_time,'%Y-%m-%d')='$do_date'"

}

import_activity_order(){

import_data activity_order "select

id,

activity_id,

order_id,

create_time

from activity_order

where

date_format(create_time,'%Y-%m-%d')='$do_date'"

}

import_user_info(){

import_data "user_info" "select

id,

name,

birthday,

gender,

email,

user_level,

create_time,

operate_time

from user_info

where (DATE_FORMAT(create_time,'%Y-%m-%d')='$do_date'

or DATE_FORMAT(operate_time,'%Y-%m-%d')='$do_date')"

}

import_order_detail(){

import_data order_detail "select

od.id,

order_id,

user_id,

sku_id,

sku_name,

order_price,

sku_num,

od.create_time

from order_detail od

join order_info oi

on od.order_id=oi.id

where

DATE_FORMAT(od.create_time,'%Y-%m-%d')='$do_date'"

}

import_payment_info(){

import_data "payment_info" "select

id,

out_trade_no,

order_id,

user_id,

alipay_trade_no,

total_amount,

subject,

payment_type,

payment_time

from payment_info

where

DATE_FORMAT(payment_time,'%Y-%m-%d')='$do_date'"

}

import_comment_info(){

import_data comment_info "select

id,

user_id,

sku_id,

spu_id,

order_id,

appraise,

comment_txt,

create_time

from comment_info

where date_format(create_time,'%Y-%m-%d')='$do_date'"

}

import_order_refund_info(){

import_data order_refund_info "select

id,

user_id,

order_id,

sku_id,

refund_type,

refund_num,

refund_amount,

refund_reason_type,

create_time

from order_refund_info

where

date_format(create_time,'%Y-%m-%d')='$do_date'"

}

import_sku_info(){

import_data sku_info "select

id,

spu_id,

price,

sku_name,

sku_desc,

weight,

tm_id,

category3_id,

create_time

from sku_info where 1=1"

}

import_base_category1(){

import_data "base_category1" "select

id,

name

from base_category1 where 1=1"

}

import_base_category2(){

import_data "base_category2" "select

id,

name,

category1_id

from base_category2 where 1=1"

}

import_base_category3(){

import_data "base_category3" "select

id,

name,

category2_id

from base_category3 where 1=1"

}

import_base_province(){

import_data base_province "select

id,

name,

region_id,

area_code,

iso_code

from base_province

where 1=1"

}

import_base_region(){

import_data base_region "select

id,

region_name

from base_region

where 1=1"

}

import_base_trademark(){

import_data base_trademark "select

tm_id,

tm_name

from base_trademark

where 1=1"

}

import_spu_info(){

import_data spu_info "select

id,

spu_name,

category3_id,

tm_id

from spu_info

where 1=1"

}

import_favor_info(){

import_data favor_info "select

id,

user_id,

sku_id,

spu_id,

is_cancel,

create_time,

cancel_time

from favor_info

where 1=1"

}

import_cart_info(){

import_data cart_info "select

id,

user_id,

sku_id,

cart_price,

sku_num,

sku_name,

create_time,

operate_time,

is_ordered,

order_time

from cart_info

where 1=1"

}

import_coupon_info(){

import_data coupon_info "select

id,

coupon_name,

coupon_type,

condition_amount,

condition_num,

activity_id,

benefit_amount,

benefit_discount,

create_time,

range_type,

spu_id,

tm_id,

category3_id,

limit_num,

operate_time,

expire_time

from coupon_info

where 1=1"

}

import_activity_info(){

import_data activity_info "select

id,

activity_name,

activity_type,

start_time,

end_time,

create_time

from activity_info

where 1=1"

}

import_activity_rule(){

import_data activity_rule "select

id,

activity_id,

condition_amount,

condition_num,

benefit_amount,

benefit_discount,

benefit_level

from activity_rule

where 1=1"

}

import_base_dic(){

import_data base_dic "select

dic_code,

dic_name,

parent_code,

create_time,

operate_time

from base_dic

where 1=1"

}

case $1 in

"order_info")

import_order_info

;;

"base_category1")

import_base_category1

;;

"base_category2")

import_base_category2

;;

"base_category3")

import_base_category3

;;

"order_detail")

import_order_detail

;;

"sku_info")

import_sku_info

;;

"user_info")

import_user_info

;;

"payment_info")

import_payment_info

;;

"base_province")

import_base_province

;;

"base_region")

import_base_region

;;

"base_trademark")

import_base_trademark

;;

"activity_info")

import_activity_info

;;

"activity_order")

import_activity_order

;;

"cart_info")

import_cart_info

;;

"comment_info")

import_comment_info

;;

"coupon_info")

import_coupon_info

;;

"coupon_use")

import_coupon_use

;;

"favor_info")

import_favor_info

;;

"order_refund_info")

import_order_refund_info

;;

"order_status_log")

import_order_status_log

;;

"spu_info")

import_spu_info

;;

"activity_rule")

import_activity_rule

;;

"base_dic")

import_base_dic

;;

"first")

import_base_category1

import_base_category2

import_base_category3

import_order_info

import_order_detail

import_sku_info

import_user_info

import_payment_info

import_base_province

import_base_region

import_base_trademark

import_activity_info

import_activity_order

import_cart_info

import_comment_info

import_coupon_use

import_coupon_info

import_favor_info

import_order_refund_info

import_order_status_log

import_spu_info

import_activity_rule

import_base_dic

;;

"all")

import_base_category1

import_base_category2

import_base_category3

import_order_info

import_order_detail

import_sku_info

import_user_info

import_payment_info

import_base_trademark

import_activity_info

import_activity_order

import_cart_info

import_comment_info

import_coupon_use

import_coupon_info

import_favor_info

import_order_refund_info

import_order_status_log

import_spu_info

import_activity_rule

import_base_dic

;;

esac说明1:

[ -n 变量值]判断变量的值,==是否为空==- 变量的值,非空,返回

true - 变量的值,为空,返回

false

- 变量的值,非空,返回

- 修改脚本权限

chmod 777 mysql_to_hdfs.sh- 初次导入

mysql_to_hdfs.sh first 2020-03-10- 每日导入

mysql_to_hdfs.sh all 2020-03-115.1 项目经验

- Hive 中的

Null在底层是以“\N”来存储,而MySQL 中的Null在底层就是Null,为了保证数据两端的一致性。在导出数据时采用--input-null-string和--input-null-non-string两个参数。导入数据时采用--null-string和--null-non-string。

One comment

主题紧扣时代脉搏,具有现实意义。